@rasta_pickles . Heh... See what happens when you post a little readily consumable data to go along with the definition of your problem?  You get more solutions and they're usually well tested!

You get more solutions and they're usually well tested!

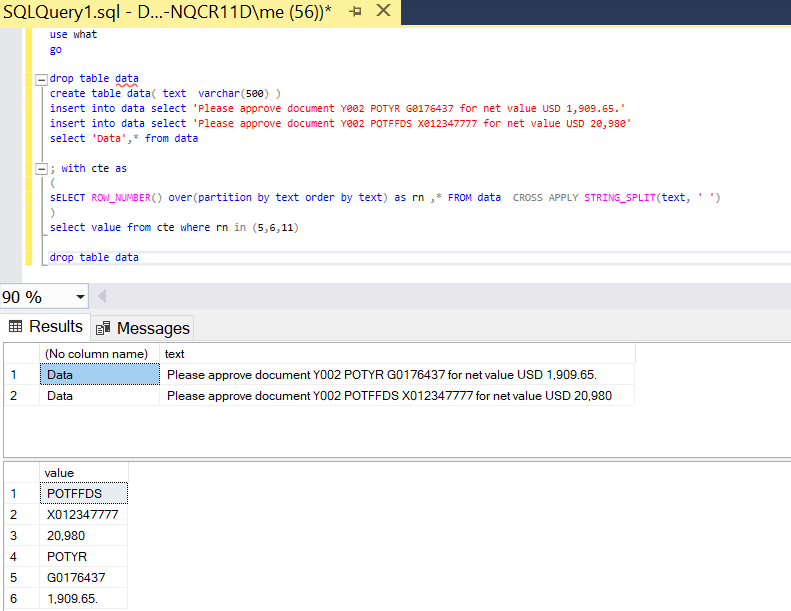

@mike01 certainly has the right idea although he didn't convert the USD column to a numeric and he left the trailing period in the column. It IS hardcoded and there's nothing wrong with that because "hardcoded" is usually faster than more flexible code.

It is also nice to know alternative methods that are a bit more flexible. Here's one of them that uses the readily consumable test data that you were good enough to provide above.

WITH cteEnumSrc AS

(

SELECT RowNum = ROW_NUMBER() OVER (ORDER BY @@SPID)

,descr = LEFT(descr,LEN(descr)-1) --Gets rid of period at end

FROM #Temp

)

SELECT POTYR = MAX(CASE WHEN split.ItemNumber = 5 THEN split.Item ELSE '' END)

,GXX = MAX(CASE WHEN split.ItemNumber = 6 THEN split.Item ELSE '' END)

,USD = CONVERT(MONEY,MAX(CASE WHEN split.ItemNumber = 11 THEN split.Item ELSE '' END))

FROM cteEnumSrc src

CROSS APPLY dbo.DelimitedSplitN4K(descr,' ') split

GROUP BY RowNum

;

The code uses the NVARCHAR() version of the popular DelimitedSplit8K function. You can read about how they work at the article links below. The first article is the original on the subject and the second article explains how a good friend of mine added a wicked performance enhancement way back in 2012.

http://www.sqlservercentral.com/Forums/Topic1101315-203-4.aspx

https://www.sqlservercentral.com/articles/reaping-the-benefits-of-the-window-functions-in-t-sql-2

Here's the DelimitedSplitN4K function. The advantage of the function over the miserable built-in STRING_SPLIT() function is that it returns the ordinal position of each element that was split out of the string, which is quite useful for things like this problem. Obviously, full documentation and history is included in the flower box and I'd appreciate it if you'd include that if you use it in your systems.

CREATE [dbo].[DelimitedSplitN4K]

/**********************************************************************************************************************

Purpose:

Split a given string at a given delimiter and return a list of the split elements (items).

This version is for NVARCHAR(4000) strings.

Notes:

1. Leading and trailing delimiters are treated as if an empty string element were present.

2. Consecutive delimiters are treated as if an empty string element were present between them.

3. Except when spaces are used as a delimiter, all spaces present in each element are preserved.

Returns:

iTVF containing the following:

ItemNumber = Element position of Item as a BIGINT (not converted to INT to eliminate a CAST)

Item = Element value as a NVARCHAR(4000)

Note that this function uses a binary collation and is, therefore, case sensitive.

The original article for the concept of this splitter may be found at the following URL. You can also find

performance tests at this link although they are now a bit out of date. This function is much faster as of Rev 09,

which was built specifically for use in SQL Server 2012 and above andd is about twice as fast as the version

document in the article.

http://www.sqlservercentral.com/Forums/Topic1101315-203-4.aspx

-----------------------------------------------------------------------------------------------------------------------

CROSS APPLY Usage Examples and Tests:

--=====================================================================================================================

-- TEST 1:

-- This tests for various possible conditions in a string using a comma as the delimiter. The expected results are

-- laid out in the comments

--=====================================================================================================================

--===== Conditionally drop the test tables to make reruns easier for testing.

-- (this is NOT a part of the solution)

DROP TABLE IF EXISTS #JBMTest

;

--===== Create and populate a test table on the fly (this is NOT a part of the solution).

-- In the following comments, "b" is a blank and "E" is an element in the left to right order.

-- Double Quotes are used to encapsulate the output of "Item" so that you can see that all blanks

-- are preserved no matter where they may appear.

SELECT SomeID, SomeValue = CONVERT(NVARCHAR(4000),SomeValue)

INTO #JBMTest

FROM ( --# of returns & type of Return Row(s)

SELECT 0, NULL UNION ALL --1 NULL

SELECT 1, SPACE(0) UNION ALL --1 b (Empty String)

SELECT 2, SPACE(1) UNION ALL --1 b (1 space)

SELECT 3, SPACE(5) UNION ALL --1 b (5 spaces)

SELECT 4, ',' UNION ALL --2 b b (both are empty strings)

SELECT 5, '55555' UNION ALL --1 E

SELECT 6, ',55555' UNION ALL --2 b E

SELECT 7, ',55555,' UNION ALL --3 b E b

SELECT 8, '55555,' UNION ALL --2 b B

SELECT 9, '55555,1' UNION ALL --2 E E

SELECT 10, '1,55555' UNION ALL --2 E E

SELECT 11, '55555,4444,333,22,1' UNION ALL --5 E E E E E

SELECT 12, '55555,4444,,333,22,1' UNION ALL --6 E E b E E E

SELECT 13, ',55555,4444,,333,22,1,' UNION ALL --8 b E E b E E E b

SELECT 14, ',55555,4444,,,333,22,1,' UNION ALL --9 b E E b b E E E b

SELECT 15, ' 4444,55555 ' UNION ALL --2 E (w/Leading Space) E (w/Trailing Space)

SELECT 16, 'This,is,a,test.' UNION ALL --4 E E E E

SELECT 17, ',,,,,,' --7 (All Empty Strings)

) d (SomeID, SomeValue)

;

--===== Split the CSV column for the whole table using CROSS APPLY (this is the solution)

SELECT test.SomeID, test.SomeValue, split.ItemNumber, Item = QUOTENAME(split.Item,N'"')

FROM #JBMTest test

CROSS APPLY dbo.DelimitedSplitN4K(test.SomeValue,N',') split

;

--=====================================================================================================================

-- TEST 2:

-- This tests for various "alpha" splits and COLLATION using all ASCII characters from 0 to 255 as a delimiter against

-- a given string. Note that not all of the delimiters will be visible and some will show up as tiny squares because

-- they are "control" characters. More specifically, this test will show you what happens to various non-accented

-- letters for your given collation depending on the delimiter you chose.

--=====================================================================================================================

WITH

cteBuildAllCharacters (String,Delimiter) AS

(

SELECT TOP 256

N'ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz0123456789',

NCHAR(ROW_NUMBER() OVER (ORDER BY (SELECT NULL))-1)

FROM sys.all_columns --Nothing is used from this table except the "Presence of Rows" as a "Pseudo-Cursor".

)

SELECT ASCII_Value = ASCII(c.Delimiter), c.Delimiter, split.ItemNumber, Item = QUOTENAME(split.Item,N'"')

FROM cteBuildAllCharacters c

CROSS APPLY dbo.DelimitedSplitN4K(c.String,c.Delimiter) split

ORDER BY ASCII_Value, split.ItemNumber

;

-----------------------------------------------------------------------------------------------------------------------

Other Notes:

1. Optimized for NVARCHAR(4000) or less. No testing or error reporting for truncation at 4000 characters is done.

2. Optimized for single character delimiter. Multi-character delimiters should be resolved externally from this

function.

3. Optimized for use with CROSS APPLY.

4. Does not "trim" elements just in case leading or trailing blanks are intended.

5. If you don't know how a Tally table can be used to replace loops, please see the following...

http://www.sqlservercentral.com/articles/T-SQL/62867/

6. Changing this function to use a MAX datatype will cause it to run twice as slow. It's just the nature of

MAX datatypes whether it fits in-row or not.

-----------------------------------------------------------------------------------------------------------------------

Credits:

This code is the product of many people's efforts including but not limited to the folks listed in the Revision

History below:

I also thank whoever wrote the first article I ever saw on "numbers tables" which is located at the following URL

and to Adam Machanic for leading me to it many years ago. The link below no longer works but has been preserved here

for posterity sake.

http://sqlserver2000.databases.aspfaq.com/why-should-i-consider-using-an-auxiliary-numbers-table.html

The original article can be seen at then following special site, as least as of 29 Sep 2019.

http://web.archive.org/web/20150411042510/http://sqlserver2000.databases.aspfaq.com/why-should-i-consider-using-an-auxiliary-numbers-table.html#

-----------------------------------------------------------------------------------------------------------------------

Revision History:

Rev 00 - 20 Jan 2010 - Concept for inline cteTally: Itzik-Ben Gan, Lynn Pettis and others.

Redaction/Implementation: Jeff Moden

- Base 10 redaction and reduction for CTE. (Total rewrite)

Rev 01 - 13 Mar 2010 - Jeff Moden

- Removed one additional concatenation and one subtraction from the SUBSTRING in the SELECT List for that tiny

bit of extra speed.

Rev 02 - 14 Apr 2010 - Jeff Moden

- No code changes. Added CROSS APPLY usage example to the header, some additional credits, and extra

documentation.

Rev 03 - 18 Apr 2010 - Jeff Moden

- No code changes. Added notes 7, 8, and 9 about certain "optimizations" that don't actually work for this

type of function.

Rev 04 - 29 Jun 2010 - Jeff Moden

- Added WITH SCHEMABINDING thanks to a note by Paul White. This prevents an unnecessary "Table Spool" when the

function is used in an UPDATE statement even though the function makes no external references.

Rev 05 - 02 Apr 2011 - Jeff Moden

- Rewritten for extreme performance improvement especially for larger strings approaching the 4K boundary and

for strings that have wider elements. The redaction of this code involved removing ALL concatenation of

delimiters, optimization of the maximum "N" value by using TOP instead of including it in the WHERE clause,

and the reduction of all previous calculations (thanks to the switch to a "zero based" cteTally) to just one

instance of one add and one instance of a subtract. The length calculation for the final element (not

followed by a delimiter) in the string to be split has been greatly simplified by using the ISNULL/NULLIF

combination to determine when the CHARINDEX returned a 0 which indicates there are no more delimiters to be

had or to start with. Depending on the width of the elements, this code is between 4 and 8 times faster on a

single CPU box than the original code especially near the 8K boundary.

- Modified comments to include more sanity checks on the usage example, etc.

- Removed "other" notes 8 and 9 as they were no longer applicable.

Rev 06 - 12 Apr 2011 - Jeff Moden

- Based on a suggestion by Ron "Bitbucket" McCullough, additional test rows were added to the sample code and

the code was changed to encapsulate the output in pipes so that spaces and empty strings could be perceived

in the output. The first "Notes" section was added. Finally, an extra test was added to the comments above.

Rev 07 - 06 May 2011 - Peter de Heer

- A further 15-20% performance enhancement has been discovered and incorporated into this code which also

eliminated the need for a "zero" position in the cteTally table.

Rev 08 - 24 Mar 2014 - Eirikur Eiriksson

- Further performance modification (twice as fast) For SQL Server 2012 and greater by using LEAD to find the

next delimiter for the current element, which eliminates the need for CHARINDEX, which eliminates the need

for a second scan of the string being split.

REF: https://www.sqlservercentral.com/articles/reaping-the-benefits-of-the-window-functions-in-t-sql-2

Rev 09 - 29 Sep 2019 - Jeff Moden

- Combine the improvements by Peter de Heer and Eirikur Eiriksson for use on SQL Server 2012 and above.

- Add Test 17 to the test code above.

- Modernize the generation of the embedded "Tally" generation available as of 2012. There's no significant

performance increase but it makes the code much shorter and easier to understand.

- Check/change all URLs in the notes abobe to ensure that they're still viable.

- Add a binary collation for a bit more of an edge on performance.

- Removed "Other Note" #7 above as UNPIVOT is no longern applicable (never was for performance).

**********************************************************************************************************************/

--=========== Define I/O parameters

(@pString NVARCHAR(4000), @pDelimiter NCHAR(1))

RETURNS TABLE WITH SCHEMABINDING AS

RETURN

--=========== "Inline" CTE Driven "Tally Table” produces values from 0 up to 10,000, enough to cover VARCHAR(8000).

WITH E1(N) AS (SELECT N FROM (VALUES (1),(1),(1),(1),(1),(1),(1),(1),(1),(1))E0(N))

,E4(N) AS (SELECT 1 FROM E1 a, E1 b, E1 c, E1 d)

,cteTally(N) AS (--==== This provides the "base" CTE and limits the number of rows right up front

-- for both a performance gain and prevention of accidental "overruns"

SELECT TOP (ISNULL(DATALENGTH(@pString)/2,0)) ROW_NUMBER() OVER (ORDER BY (SELECT NULL)) FROM E4

)

,cteStart(N1) AS (--==== This returns N+1 (starting position of each "element" just once for each delimiter)

SELECT 1 UNION ALL

SELECT t.N+1 FROM cteTally t WHERE SUBSTRING(@pString COLLATE Latin1_General_BIN,t.N,1)

= @pDelimiter COLLATE Latin1_General_BIN

)

--=========== Do the actual split.

-- The ISNULL/NULLIF combo handles the length for the final element when no delimiter is found.

SELECT ItemNumber = ROW_NUMBER() OVER (ORDER BY s.N1)

, Item = SUBSTRING(@pString,s.N1,ISNULL(NULLIF((LEAD(s.N1,1,1) OVER (ORDER BY s.N1)-1),0)-s.N1,4000))

FROM cteStart s

;

You get more solutions and they're usually well tested!

You get more solutions and they're usually well tested!